Some thoughts on AI and college and career decisions

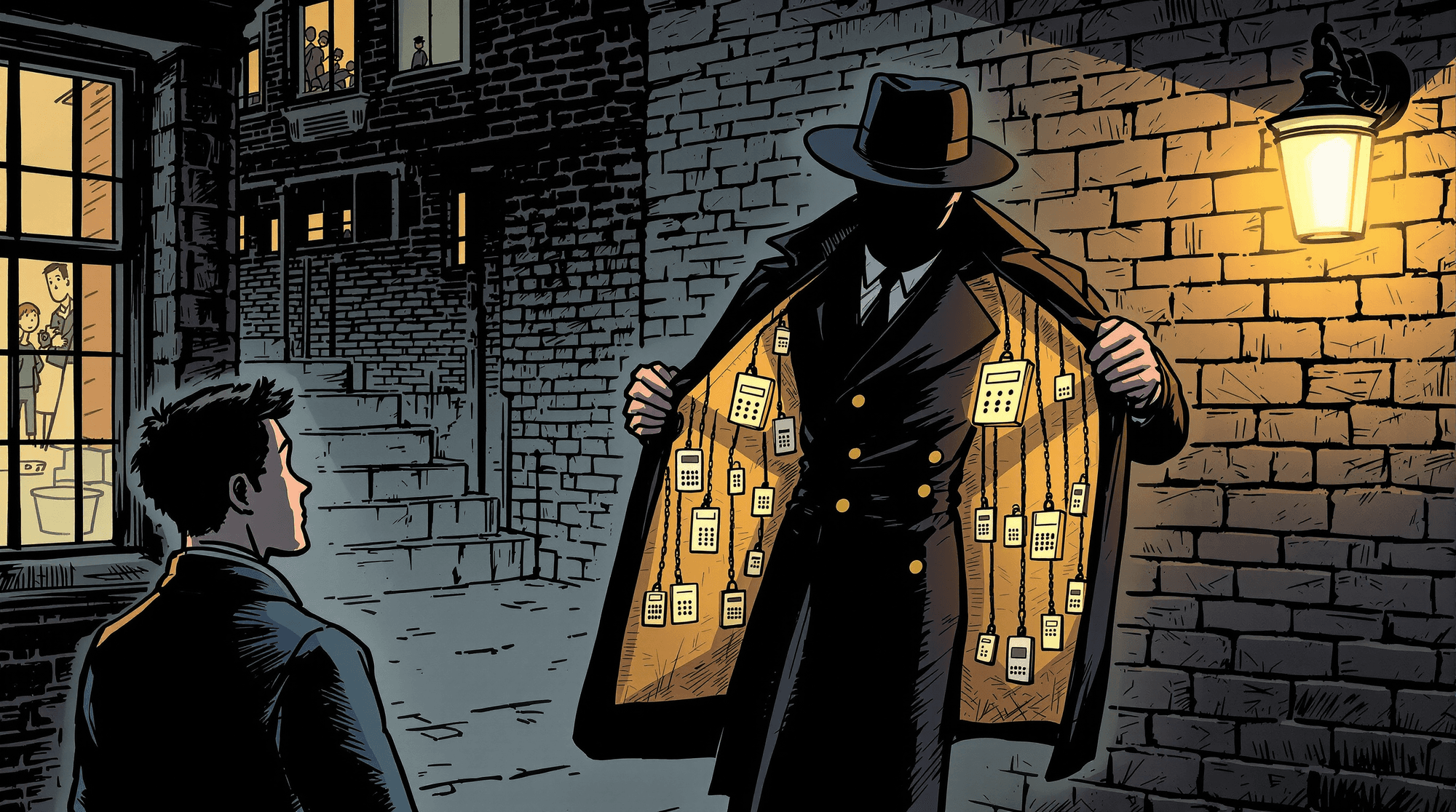

AI is already part of college and career decisions. The question is whether it narrows the structural advising gap families face or widens it.

This week we're going to shift gears a bit and talk about the macro state of play around AI, college decisions, and everything in between. To start, I'm going to share a different type of data: the data from my own website.

Specifically, the AI citation log Microsoft sends me from Bing Webmaster Tools. Over 29 days — March 26 through April 23, 2026 — Microsoft's AI tools cited collegeazimuth.com 11,143 times.

This site is a side project. I built it to explore my own analyses and post the threads on LinkedIn. There is no marketing operation behind it. There is no PR firm. I have not run a link-building campaign. My Domain Rating is a balmy 0.00 out of 100. To put that in context, the major college-information sites (College Board, US News, Niche) sit at DR 80–90+. Major individual school sites typically run 50–80. By every traditional measure of web authority, this site is at the floor.

And yet, a few months into posting, Microsoft's AI is citing it eleven thousand times a month. That is likely a drop in the bucket of what is going on across the web, but I want to use it as an opportunity to talk about why I think it's significant and what it means.

Bing's report shows me only the most-cited queries, not all of them. The queries Bing does surface account for 13% of the 11,143 total citations. In general, what we do see is that the queries are specific, high-intent questions — the kind a student or parent or counselor asks when they're trying to understand the actual choices in front of them.

Here's the breakdown of what's visible:

| Category | Citations | Share |
|---|---|---|
| Cost / affordability / tuition | 460 | 32% |
| Career outcomes / earnings / job placement | 448 | 31% |
| Majors / programs at a specific school | 146 | 10% |
| Regional best-of (e.g., "best engineering school Midwest") | 136 | 10% |
| Peer comparison / "alternatives to" / "similar to" | 119 | 8% |
| Overall ranking | 107 | 8% |
| Other | 19 | 1% |
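For anyone who wants to check the table, the shares can be recomputed from the raw counts Bing reported. This is a minimal Python sketch; the only inputs are the counts above and the 11,143 total, and two category labels are shortened. At one decimal place, regional best-of comes out to 9.5% and overall ranking to 7.5%, so the whole-number column appears to be rounded so that it sums to 100%.

```python
"""Recompute the citation-share table from the raw counts Bing reported."""

# Visible citation counts by category, March 26 to April 23, 2026.
# Some category labels are shortened from the table.
VISIBLE_COUNTS = {
    "Cost / affordability / tuition": 460,
    "Career outcomes / earnings / job placement": 448,
    "Majors / programs at a specific school": 146,
    "Regional best-of": 136,
    "Peer comparison / alternatives": 119,
    "Overall ranking": 107,
    "Other": 19,
}
TOTAL_CITATIONS = 11_143  # every AI citation Bing logged in the 29-day window


def percent_shares(counts: dict[str, int]) -> dict[str, float]:
    """Return each category's share of the visible total, in percent."""
    total = sum(counts.values())
    return {name: 100 * n / total for name, n in counts.items()}


if __name__ == "__main__":
    visible_total = sum(VISIBLE_COUNTS.values())
    print(f"Visible: {visible_total:,} of {TOTAL_CITATIONS:,} "
          f"({100 * visible_total / TOTAL_CITATIONS:.1f}%)")

    shares = percent_shares(VISIBLE_COUNTS)
    for name, pct in shares.items():
        print(f"{name}: {pct:.1f}%")

    # The two money questions: what will it cost, and what will I earn?
    money = (shares["Cost / affordability / tuition"]
             + shares["Career outcomes / earnings / job placement"])
    print(f"Cost + career outcomes: {money:.1f}%")
```

It reports 1,435 visible citations (12.9% of the total, the "13%" above) and a combined cost-plus-career share of 63.3%.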

Nearly two-thirds of what we see (63%) is some version of "what will this actually cost me, and what will I earn afterward?" These are exactly the questions where a wrong answer translates straight into a wrong financial decision.

This isn't really that surprising. I started College Azimuth precisely because I knew financial considerations were a big driver for many families, and because the information out there really wasn't very good. What surprised me is the volume, and how quickly it's ratcheted up. So what is really going on here?

The structural time gap that can't be filled.

The conversation around AI in college and career advising has been picking up steam over the past year. Last summer the U.S. Department of Education named "AI for College and Career Pathway Exploration, Advising, and Navigation" as one of three federal AI priorities in its Dear Colleague letter. EAB's most recent national survey (February 2026) found that 46% of high school students now use AI in their college search, up from 26% just six months earlier. Eighteen percent have already removed a college from consideration based on something AI told them, and 39% say AI is pushing them to consider not going to college at all.

I throw the 11,143 number into that conversation to make a single argument: AI is here in this space whether we like it or not.

It makes sense, of course. The American School Counselor Association has recommended a 250:1 student-to-counselor ratio since 1965. Sixty-one years of the same ask. Only four states meet it today: Vermont, New Hampshire, Hawaii, and Colorado. The other forty-six fall short of an aspiration older than most of their counselors.

For students, the most rigorous publicly available estimate I could find, from NACAC's 2005 State of College Admission report, pegs counselor time on personal college admissions guidance in public high schools at 38 minutes per student per year. Less than forty minutes, once a year, on what is for most families the most consequential investment decision of their lives. The same report finds public-school counselors spend 28% of their time on college counseling, against 61% for private-school counselors. The kids who most need the help get the least time on the question.

That being said, we can’t ignore broader data trends that point to improved ratios and more frequent advising. More recent ASCA data puts high school ratios in a range of 195:1 to 224:1, meeting the ASCA-recommended ratio for the first time. This, plus the expansion of advising roles to complement counselor capacity, signals a positive trend. Check out a report on Tennessee’s state-funded Advise TN, a statewide counseling program.

However, even with this progress, individualized support remains both a math problem and a class problem.

So of course students go elsewhere to work it through. They always have. Historically that meant parents, friends, an older cousin who went to State, the neighbor who came back from college and seemed to know what they were doing. I've talked a lot about my Army recruiter, who was the only person with the time and the ability to talk productively with me about the paths open to me.

This isn't just a convenient narrative device I trot out: Britebound’s Teen Next Steps report found that parents are the biggest influence on students’ postsecondary plans, followed by another family member.

Now that supplementary network also includes Claude and ChatGPT. That's the only thing that's actually new.

The implications of AI in the decision-making world.

I view this as both an opportunity and a risk.

The opportunity is real. How many of us know someone who got bad advice on a college or career decision from a relative or friend who simply didn't know what they were talking about? How many of us watch families default to prestige-driven rankings — US News, mostly — for no better reason than that's what's available?

How many of us see students miss important information about which institutions are better for specific programs and specific student career interests?

In theory, AI can change that. A student in a rural high school with one overworked counselor and a parent who didn't go to college can ask a question and get back something resembling a thoughtful, contextualized answer — grounded in real data, tailored to her situation, available at 9 PM on a Tuesday when the question actually lands.

That's the opportunity around all of this.

But it only works if the data itself is good, and if the context is used well. It also only works if AI can find the Goldilocks zone of advising that master counselors have perfected after years of conversations with teenagers. Not too deferential: “Go anywhere you want!” Not too authoritative: “Based on your academic profile, career interest quiz, and financial needs, your options are these 2 community colleges.”

Let me give you a personal example of how it can go wrong. I use AI a fair bit in my own research — I built an agent on top of my database to help me code analyses faster, and I lean on AI to do first-pass lit reviews when I'm preparing a piece. This piece was no different. I asked the AI to scope this story for me, including verifying some of the load-bearing claims I planned to make. How often do high schoolers use AI in their college search? How much of a counselor's time goes to college counseling? How much time per student per year? Standard stuff.

The AI came back with confident, specific numbers attributed to named sources. It cited Bowdoin's Hastings AI Initiative for a "70% of teens have used genAI" figure. It cited NACAC for a "24% public / 52% private" counselor-time-on-college split. It traced the foundational "38 minutes per student per year" figure to a 2011 College Board / NOSCA report.

Of the four sourcing claims it made, three were wrong, including all three above. Some were simply made up, yet the AI delivered every one of them with the same confidence.

I pushed back. The Bowdoin "70%" turned out to be from a synthesis report — a literature review — not original research. The actual primary source, a NORC-conducted survey from Common Sense Media, Hopelab, and Harvard's Center for Digital Thriving, has the headline at 51%, not 70%. The "24% / 52%" counselor-time numbers don't appear in any primary source I could find. The actual NACAC numbers, from Table 58 of the 2005 State of College Admission report, are 28% public / 61% private. And the 38-minute figure isn't from the 2011 College Board paper at all. It's in NACAC's 2005 State of College Admission, page 119, derived from older NCES data. Different publisher, different year, different document.

It took three rounds of pushback and four primary PDFs, pulled directly, to fix all of it. In the end, I just asked my buddy Andy what the answer really was (more on this below). My experience parallels an experiment funded by Renaissance Philanthropy, which ran a structured evaluation of LLM career advice and concluded that if these tools were graded like students, most would get a C.

I've spent about a decade with this kind of data. I know what to push on, I have time, and I know where the primary sources live. A 17-year-old asking ChatGPT "how much will this school actually cost me, and what will I earn after?" has none of that. The errors I caught here are exactly the errors that would otherwise ride along unchallenged, straight to a kitchen table somewhere on a Tuesday night.

It’s the errors, plus the emerging research on AI as always-affirming and sycophantic, that present the biggest risks for AI in the college and career guidance space.

So to me, the question is much less "Is AI good or bad?" or "Should we use it or not?" It's more: it's here, so what are we going to do about it?

So what does it actually look like to do this differently?

I've been exploring ways to integrate my data backend with others doing the work: the people who advise students, build the tools, and run the programs. I'm looking for others exploring AI in this space to work together, share notes, and figure out how to make it work in practice.

One example. I've been working with Andy Schmitz to help him build his own custom GPT using my data backend. He brings the practitioner side — the rules, the workflows, the questions that matter to the students he works with. The data layer handles the grounded numbers underneath. The result is a tool that fits his actual practice, with answers tied to real data, without him needing to know anything about how College Scorecard, IPEDS, BLS, and Common Data Set filings get reconciled across years.

That's the model I'm interested in. And it points at something specific.

To do this right, every single AI tool that wants to be useful for college decisions has to have at least two things:

1) Specific, grounded context, frameworks, and processes that reflect the many approaches good counselors around the country use and that we know work. A generic "how to think about this" approach that tries to thread the needle between a student on Chicago's South Side and a student in Kentucky will likely not be very useful for either.

Ideally, this is where people in the space who know what they are doing, like Andy or Habib at Urban Assembly, can step up and take the lead. This isn't gonna be solved by tech bros working in isolation from the knowledge that real educators have.

2) A dedicated back-end data infrastructure: net price by income bracket, parent borrowing data, graduate earnings by field of study, and labor market signals by state.

Doing that well runs a few hundred thousand dollars in build cost, plus six figures a year in maintenance to keep it current. And that's before you find the people who can do the work right: someone who can specify what correct means, someone who can build it, and someone who owns the methodology when the federal government changes a definition mid-year. That combination is uncommon, and especially rare at the organizations where students most need the data.

Every AI tool building this independently is the field paying the same cost over and over. This is where I am hoping the ecosystem can set something up (or I guess I can) so educators who will never be data engineers don't have to raise half a million dollars and wrangle with consultants to bring their expertise to the table.

A shared data layer would let everyone focus on their students instead. There are roughly three levels of integration to think about:

| Level | What it is | What it does |
|---|---|---|
| 1. Knowledge layer | Documented frameworks, methodologies, data dictionaries | Any AI tool can incorporate it directly into its prompt or training. No integration required. |
| 2. Tool API | Standardized endpoints — like a Financial GPS for college affordability — that apply the frameworks | The model handles the conversation. The data layer handles the numbers. Works via standard function calling or MCP. |
| 3. Data infrastructure | A combined database of national outcome data, BLS labor market statistics, and individual school data (Common Data Sets) | The bedrock layer everything else sits on. |
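To make Level 2 a little more concrete, here is a minimal Python sketch of what one data-layer tool might look like: a function-calling style description the model reads, and a handler that does the actual lookup. Everything in it is an assumption for illustration: the tool name `get_net_price`, its argument names, the made-up school ID `000000`, and the prices, which are placeholders rather than real data. The income cutoffs mirror the five federal net-price brackets reported in IPEDS. The point is the division of labor: the model turns a family's question into arguments, and the data layer, not the model, supplies the number.

```python
"""Hypothetical Level 2 tool: the model asks, the data layer answers."""
import json

# Function-calling style description the model would see. The tool name,
# argument names, and schema are illustrative assumptions, not a real API.
NET_PRICE_TOOL = {
    "name": "get_net_price",
    "description": "Average net price at a school for a family's income bracket.",
    "parameters": {
        "type": "object",
        "properties": {
            "unitid": {"type": "string", "description": "IPEDS unit ID of the school"},
            "family_income": {"type": "integer", "description": "Annual family income in USD"},
        },
        "required": ["unitid", "family_income"],
    },
}

# Federal net-price income brackets as (inclusive upper bound, label);
# None marks the open-ended top bracket.
BRACKETS = [
    (30_000, "0-30k"),
    (48_000, "30-48k"),
    (75_000, "48-75k"),
    (110_000, "75-110k"),
    (None, "110k+"),
]

# PLACEHOLDER numbers for a made-up school ID. A real data layer might load
# these from reconciled College Scorecard / IPEDS files instead.
NET_PRICE_BY_BRACKET = {
    "000000": {"0-30k": 9_000, "30-48k": 11_000, "48-75k": 15_000,
               "75-110k": 20_000, "110k+": 26_000},
}


def income_bracket(family_income: int) -> str:
    """Map an annual family income to its bracket label."""
    for upper, label in BRACKETS:
        if upper is not None and family_income <= upper:
            return label
    return BRACKETS[-1][1]  # above every capped bracket


def get_net_price(unitid: str, family_income: int) -> dict:
    """Look up the average net price for one school and income level."""
    prices = NET_PRICE_BY_BRACKET.get(unitid)
    if prices is None:
        return {"error": f"no data for unitid {unitid}"}
    bracket = income_bracket(family_income)
    return {"unitid": unitid, "bracket": bracket, "avg_net_price": prices[bracket]}


def handle_tool_call(name: str, arguments_json: str) -> str:
    """Run the tool the model asked for and return JSON to send back to it."""
    handlers = {"get_net_price": get_net_price}
    if name not in handlers:
        return json.dumps({"error": f"unknown tool {name}"})
    args = json.loads(arguments_json)
    return json.dumps(handlers[name](**args))


if __name__ == "__main__":
    # A model that read NET_PRICE_TOOL might emit this call for a family
    # earning $52,000 a year.
    print(handle_tool_call("get_net_price", '{"unitid": "000000", "family_income": 52000}'))
```

Running it prints `{"unitid": "000000", "bracket": "48-75k", "avg_net_price": 15000}`, the JSON an application would hand back to the model. Keeping the lookup in a plain function, separate from any particular SDK, is what would let the same handler sit behind function calling, an MCP server, or a custom GPT.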

What I'm looking for

I'm looking for two kinds of partners — and some people will be both.

The first is organizations doing interesting work with AI in college and career advising who are willing to talk about it. How are you integrating data into your practice? What's working? What isn't? What do you wish you had?

The second is organizations that want better data infrastructure underneath their AI tools and are willing to work with me to build it out for others to use. What you get: the shared data layer applied to what you're already doing — making it more grounded, more defensible, cheaper to maintain. What I get: real-world insight into how advising organizations actually use data and AI to help students, which is what shapes what gets built next.

If your organization fits either description — or both — I'd like to talk.

Daniel@collegeazimuth.com